If you use Azure DevOps to build and deploy your .NET Core applications, you should consider the following.

- Azure Pipelines now supports composing both the build and release stages as code. You can now combine your CI and CD pipeline definitions into a single pipeline definition that lives within the same repository as the application code.

- Turn on binary logging in MSBuild so that you receive exhaustive structured logs from the build process. You can visualize these logs using MSBuild Structured Log Viewer to inspect the build process in great detail.

- Add Coverlet, a cross-platform code coverage framework, to collect code coverage data, and generate neat code coverage reports with Report Generator.

To demonstrate these concepts, we will build an integrated CI/CD Azure Pipeline that builds and deploys an Azure Function to Azure. Let’s first briefly discuss the utilities that I mentioned above.

MSBuild Binary Log and Viewer

For most of us, the MSBuild process is a black box that chews source code and spits out application binaries. To address the opaqueness of build logs, MSBuild has supported structured logging since version 15.3. Structured logs complement the file and console loggers, which MSBuild has supported for a long time. You can enable binary logging in MSBuild by setting the /bl switch and optionally specifying the name of the log file, which is build.binlog in the following command.

$ msbuild.exe MySolution.sln /bl:build.binlog

The binary log file generated by the previous command is not human-readable. The MSBuild Structured Log Viewer utility helps you visualize the log and makes navigating it a breeze.

Coverlet and Report Generator

Coverlet is a cross-platform tool written in .NET Core that measures the coverage of unit tests in .NET and .NET Core projects. The easiest way to use Coverlet is to include the Coverlet NuGet package in your test projects and use MSBuild to execute the tests. You can use the following commands to perform the two operations.

$ dotnet add package coverlet.msbuild

$ dotnet test /p:CollectCoverage=true

Report Generator is a visualization tool that takes a code coverage file in a standard format such as Cobertura or Clover and converts it to other useful formats such as HTML. Because it integrates with Azure Pipelines, you can see the code coverage results alongside the build results in the same pane.

Integrated CI/CD Pipeline

Azure DevOps now supports writing Release pipelines as code. You can now compose a single definition that includes both the build and release stages and keep it in the source code. Shortly, we will build a YAML definition that includes both the build and release stages of our application.

The Sample Application

I created a simple Azure Function named GetLoLz that returns a string containing the text “LoL.” repeated as many times as the count query parameter of the HTTP request specifies, as follows.

[GET] http://<hostname>.<domain name>/api/GetLoLz?count=<int>

Let’s get started.

Source Code

You can download the source code of the sample application from my GitHub repository.

I used .NET Core 3.0, Visual Studio 2019, and Azure Functions v2 to build the application.

The LoL Function

In Visual Studio (or VSCode), create a new Function app project named AzFunction.LoL. Create a new class file and add the following HTTP function to your project.

[FunctionName(nameof(GetLoLz))]
public static IActionResult GetLoLz(
    [HttpTrigger(AuthorizationLevel.Anonymous, "get")]
    HttpRequest request,
    ILogger log)
{
    log.LogInformation("C# HTTP trigger function processed a request.");

    return int.TryParse(request.Query["count"], out var count)
        ? (ActionResult)new OkObjectResult($"{string.Join(string.Empty, Enumerable.Repeat("LoL.", count))}")
        : new BadRequestObjectResult("Please pass a count on the query string");
}

Launch the application now and visit the following URL from your browser, or send a GET request from an API client such as Postman. Since your application might be available on a different port, check the Azure Functions Core Tools console for the address of your HTTP function.

http://localhost:7071/api/GetLoLz?count=1000

Let’s now add a few tests for the function that you just created. Create an xUnit test project in your solution and add the coverlet.msbuild and coverlet.collector NuGet packages to it. Now, add a few tests to the project. For details, check out the AzFunction.LoL.Tests project in the GitHub repository of this application.

Provision Azure Resources

Let’s use the Azure CLI to create a Resource Group and an Azure Function App for our project. The following commands will create a Resource Group named FxDemo, a storage account named lolfxstorage, and an Azure Function App named LoLFx. You can choose other names for your resources and substitute them in the commands below. Remember to log in with the az login command before executing the following commands.

$ az group create -l australiaeast -n FxDemo

$ az storage account create -n lolfxstorage -g FxDemo -l australiaeast --sku Standard_LRS

$ az functionapp create --resource-group FxDemo --consumption-plan-location australiaeast --name LoLFx --storage-account lolfxstorage --runtime dotnet --os-type Linux

Log in to the Azure portal and verify the status of all the resources. If all of them are up and running, then you are good to go.

Composing CI/CD Pipeline - Build Stage

Azure Pipelines automatically picks up the build and release definition from the azure-pipelines.yml file at the root of your repository. You can store your code in any popular version control system such as GitHub and use Azure Pipelines to build, test, and deploy the application. Use this guide to connect your version control system to Azure DevOps. We will now define our integrated build and release pipeline.

Create a file named azure-pipelines.yml at the root of the repository and add the following code to it.

trigger:
  branches:
    include:
      - '*'

stages:
  - stage: Build
    jobs:
      - job: Build_Linux
        pool:
          vmImage: ubuntu-18.04
        variables:
          buildConfiguration: Release
        steps:
          - template: .azure-pipelines/restore-packages.yml
          - template: .azure-pipelines/build.yml
          - template: .azure-pipelines/test.yml
          - template: .azure-pipelines/publish.yml

  - stage: Deploy
    jobs:
      - job: Deploy_Fx
        pool:
          vmImage: ubuntu-18.04
        variables:
          azureSubscription: LoLFxConnection
          appName: LolFx
        steps:
          - template: .azure-pipelines/download-artifacts.yml
          - template: .azure-pipelines/deploy.yml

I like to adopt the Single Responsibility Principle (SRP) everywhere in my application code. Azure Pipelines extends SRP to DevOps by giving you the flexibility to write the definition of individual steps that make up your pipeline in independent files. You can reference the files that make up the pipeline in the azure-pipelines.yml file to compose the complete pipeline.

You can see in the previous definition that this pipeline is triggered whenever Azure Pipelines detects a commit on any branch of the repository. Next, we defined two stages in the pipeline named Build and Deploy, which kick off to build and deploy the application respectively. We used the same type of agent (a VM with an Ubuntu image) to carry out the tasks of building and deploying our application. Even if you are using a cross-platform framework such as .NET Core, you should use agents that run the same OS platform as your application servers. A common platform ensures that tests verify the behavior of your application on the actual platform and that any platform-related issues surface immediately.
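Stages run in the order in which they are declared, and each stage waits for the previous one by default. Because the trigger above matches every branch, one option is to let the Deploy stage run only for a designated branch. The following is a minimal sketch of that idea and is not part of the sample repository: it assumes a release branch named master, and the echo scripts are placeholders for the templates referenced in the pipeline definition.

```yaml
stages:
  - stage: Build
    jobs:
      - job: Build_Linux
        pool:
          vmImage: ubuntu-18.04
        steps:
          # Placeholder for the restore, build, test, and publish templates.
          - script: echo "build steps go here"

  - stage: Deploy
    # Consecutive stages depend on each other implicitly; spelled out here for clarity.
    dependsOn: Build
    # Assumption: deployments should only happen from the master branch.
    condition: and(succeeded(), eq(variables['Build.SourceBranch'], 'refs/heads/master'))
    jobs:
      - job: Deploy_Fx
        pool:
          vmImage: ubuntu-18.04
        steps:
          # Placeholder for the download-artifacts and deploy templates.
          - script: echo "deploy steps go here"
```

With a condition like this, commits on other branches would still run the Build stage, including tests and coverage, while the Deploy stage is skipped.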

As you can see, I have stored all the templates in a folder named .azure-pipelines at the root of the repository. Let’s go through the templates that we used for the build stage. The first step that will execute is restore-packages, which will restore the NuGet packages of the solution.

steps:
  - task: DotNetCoreCLI@2
    displayName: Restore Nuget
    inputs:
      command: restore

The next step in the sequence is build, which will build the solution. We will set the /bl flag to instruct MSBuild to generate a binary log. Since we want to investigate this log file later in case of issues, we will publish the file that contains the binary log as a build artifact.

steps:
  - task: MSBuild@1
    displayName: Build - $(buildConfiguration)
    inputs:
      configuration: $(buildConfiguration)
      solution: AzFunction.sln
      clean: true
      msbuildArguments: /bl:"$(Build.SourcesDirectory)/BuildLog/build.binlog"
  - task: PublishPipelineArtifact@1
    displayName: Publish BinLog
    inputs:
      path: $(Build.SourcesDirectory)/BuildLog
      artifact: BuildLog
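The MSBuild@1 task needs an MSBuild installation on the agent. On agents that only have the .NET Core SDK, the same binary log can be produced through the dotnet CLI, because dotnet build forwards the -bl switch to MSBuild. The following is a minimal sketch of that variant, not the template used in the sample repository; it assumes the buildConfiguration variable and the AzFunction.sln solution from the pipeline above.

```yaml
steps:
  # Assumption: this task replaces MSBuild@1; the Publish BinLog task stays unchanged.
  - task: DotNetCoreCLI@2
    displayName: Build - $(buildConfiguration)
    inputs:
      command: build
      projects: AzFunction.sln
      # --no-restore relies on the earlier restore-packages step having run.
      arguments: --configuration $(buildConfiguration) --no-restore -bl:$(Build.SourcesDirectory)/BuildLog/build.binlog
```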

The next step of this stage is test, which executes all the tests. There are three individual tasks in this step. The first task executes the tests in the AzFunction.LoL.Tests project with the flags that make Coverlet generate a code coverage report in Cobertura format in a folder named Coverage. The second task installs the Report Generator tool and uses it to convert the coverage report to an HTML report that is visible in the Azure Pipelines dashboard. The final task publishes the coverage report so that you can download it later.

steps:
  - task: DotNetCoreCLI@2
    displayName: Run Tests
    inputs:
      command: test
      projects: "AzFunction.LoL.Tests/AzFunction.LoL.Tests.csproj"
      arguments: "--configuration $(buildConfiguration) /p:CollectCoverage=true /p:CoverletOutputFormat=cobertura /p:CoverletOutput=$(Build.SourcesDirectory)/TestResults/Coverage/"
  - script: |
      dotnet tool install dotnet-reportgenerator-globaltool --tool-path .
      ./reportgenerator -reports:$(Build.SourcesDirectory)/TestResults/Coverage/**/coverage.cobertura.xml -targetdir:$(Build.SourcesDirectory)/CodeCoverage -reporttypes:'HtmlInline_AzurePipelines;Cobertura'
    displayName: Create Code Coverage Report
  - task: PublishCodeCoverageResults@1
    displayName: Publish Code Coverage Report
    inputs:
      codeCoverageTool: Cobertura
      summaryFileLocation: "$(Build.SourcesDirectory)/CodeCoverage/Cobertura.xml"
      reportDirectory: "$(Build.SourcesDirectory)/CodeCoverage"
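Coverlet's MSBuild integration can also fail the test run when coverage drops below a minimum, which turns the report into a gate rather than just a dashboard. The following is a sketch of the Run Tests task with such a gate. The 80 percent line-coverage minimum is an arbitrary, illustrative value and is not part of the sample repository.

```yaml
steps:
  - task: DotNetCoreCLI@2
    displayName: Run Tests
    inputs:
      command: test
      projects: "AzFunction.LoL.Tests/AzFunction.LoL.Tests.csproj"
      # Threshold and ThresholdType are coverlet.msbuild properties; 80 is an illustrative value.
      arguments: "--configuration $(buildConfiguration) /p:CollectCoverage=true /p:CoverletOutputFormat=cobertura /p:CoverletOutput=$(Build.SourcesDirectory)/TestResults/Coverage/ /p:Threshold=80 /p:ThresholdType=line"
```

If the gate fails, the later report tasks are skipped by default. Giving them a condition such as succeededOrFailed() would keep the coverage report available for failed runs.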

The final step in the build stage is publish. This step is responsible for publishing the application as an artifact that will be used by the next stage, which is responsible for deploying the published artifact. The following code listing presents the three tasks responsible for publishing the build artifact.

steps:
  - task: DotNetCoreCLI@2
    displayName: Publish App
    inputs:
      command: publish
      publishWebProjects: false
      arguments: "-c $(buildConfiguration) -o out --no-build --no-restore"
      zipAfterPublish: false
      modifyOutputPath: false
      workingDirectory: AzFunction.LoL
  - task: CopyFiles@2
    displayName: Copy App Output to Staging Directory
    inputs:
      sourceFolder: AzFunction.LoL/out
      contents: '**/*'
      targetFolder: $(Build.ArtifactStagingDirectory)/function
  - task: PublishPipelineArtifact@1
    displayName: Publish Artifact
    inputs:
      path: $(Build.ArtifactStagingDirectory)/function
      artifactName: Function

In the previous listing, the first task executes the dotnet publish command and emits the application binaries in a folder named out. The next task copies the contents of the out folder to another folder named function in the ArtifactStagingDirectory. The final task publishes the function folder as a build artifact so that the next stage can deploy its contents to the Azure Function App.

Adding ARM Service Connection

Before we discuss the Deploy stage of the pipeline, we will take a detour to connect our Azure Pipeline to our Azure subscription to deploy our function.

Azure Pipelines allows you to define service connections so that you don’t have to store external service credentials and nuances of connecting to an external service within the tasks of your Azure Pipelines. Let’s add a service connection to Azure Resource Manager so that we can reference it within our release pipeline.

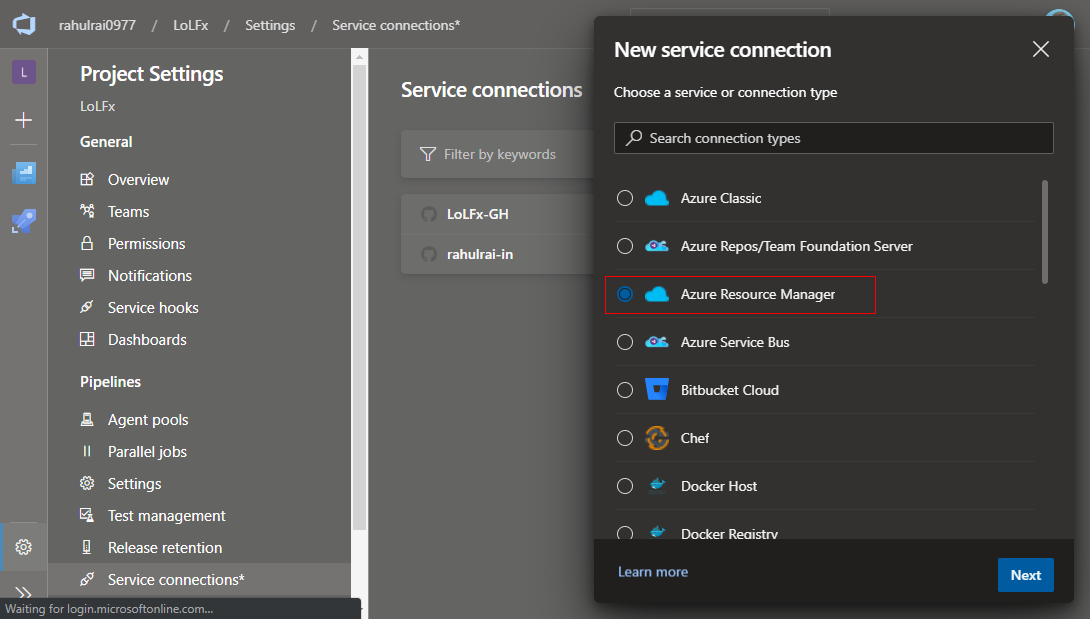

The service connection wizard is available in the Service Connection page inside the Project Settings page. You can refer to the documentation of service connection to find your way to this wizard. I will walk you through the steps that I took for creating an Azure Resource Manager service connection to my subscription. In the service connection wizard, select Azure Resource Manager as the service that we want to connect with.

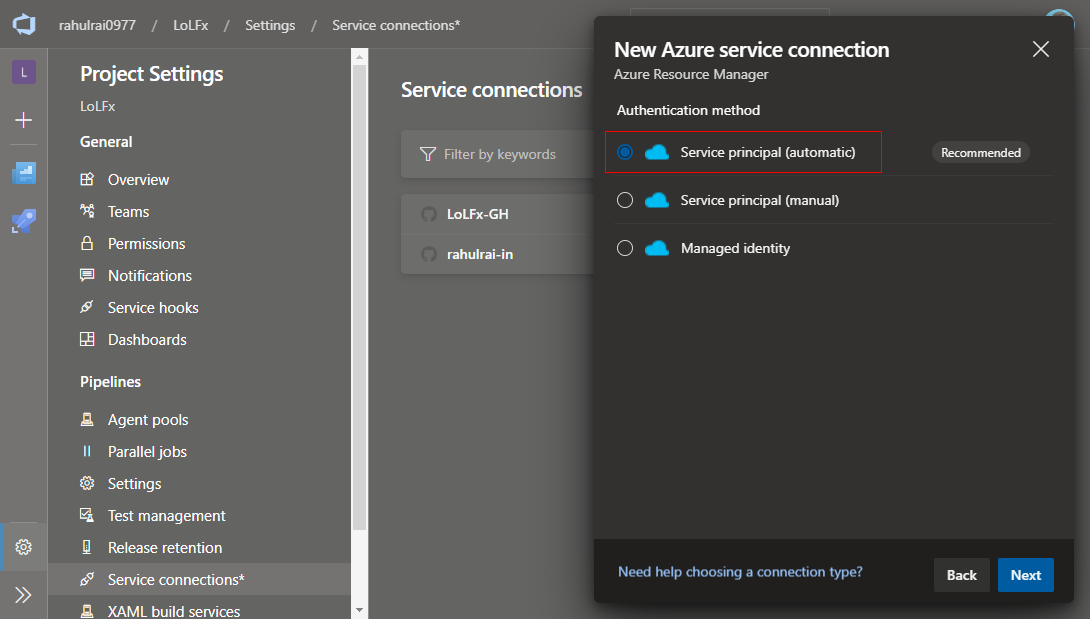

Next, we need to provide a service principal or generate a Managed Identity in Azure AD, which will allow this service connection to connect to our Azure subscription. Select the Service principal (automatic) option so that the wizard can generate and store the service principal automatically.

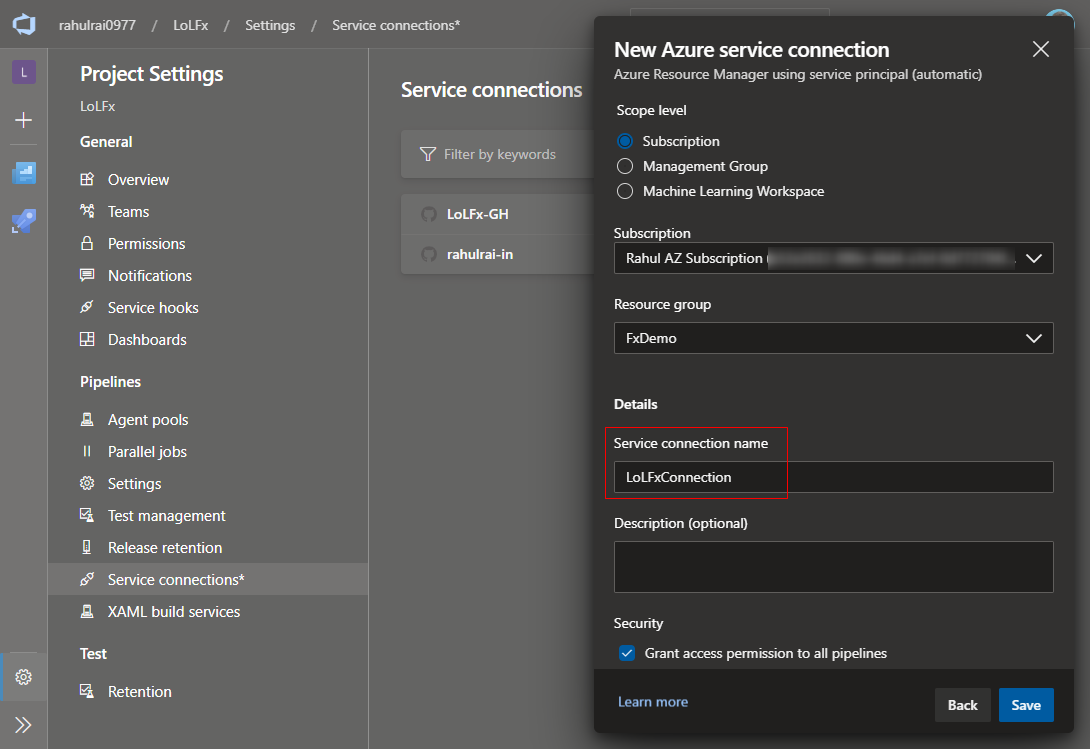

In the next step of the wizard, set the scope of connection to Subscription. After selecting the option, the wizard will populate the next input controls with subscriptions and resource groups that you can access. After selecting the subscription and resource group that you created previously, enter a name for this connection. The name that you assign to the connection will be used in the pipeline to refer to the service connection, which will abstract all the connection nuances from the task. I named my service connection LoLFxConnection.

After saving the information, we will be able to use the name of the connection LoLFxConnection to connect to Azure from our pipeline.

Composing CI/CD Pipeline - Deploy Stage

Let’s discuss the steps that make up the Deploy stage of our pipeline. The first step in this stage is download-artifacts, which downloads the Function artifact produced by the Build stage.

steps:
  - task: DownloadPipelineArtifact@2
    displayName: Download Build Artifacts
    inputs:
      buildType: current
      downloadType: single
      downloadPath: "$(System.ArtifactsDirectory)"
      artifactName: Function

Finally, the last step in the pipeline, named deploy, is responsible for publishing the release artifact to the Azure Function App.

steps:
  - task: AzureFunctionApp@1
    displayName: Azure Function App Deploy
    inputs:
      azureSubscription: LoLFxConnection
      appName: "$(appName)"
      package: "$(System.ArtifactsDirectory)"

Note that we referenced the Azure subscription using the name of the service connection that we created previously.

We are done with the setup now. Commit the changes and push the branch to GitHub so that Azure Pipelines can initiate the CI/CD process.

Report Generator in Action

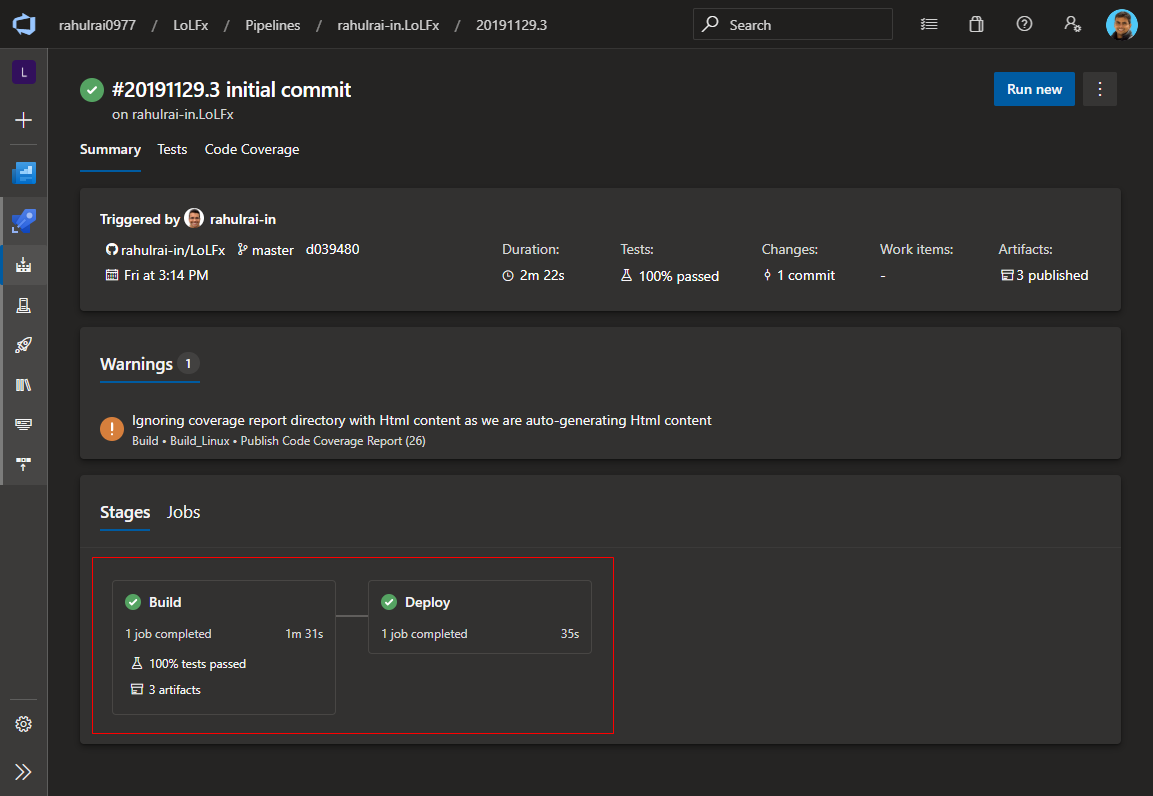

After the pipeline that we defined runs to completion, navigate to the Azure Pipelines dashboard to verify that both stages defined in our pipeline succeeded.

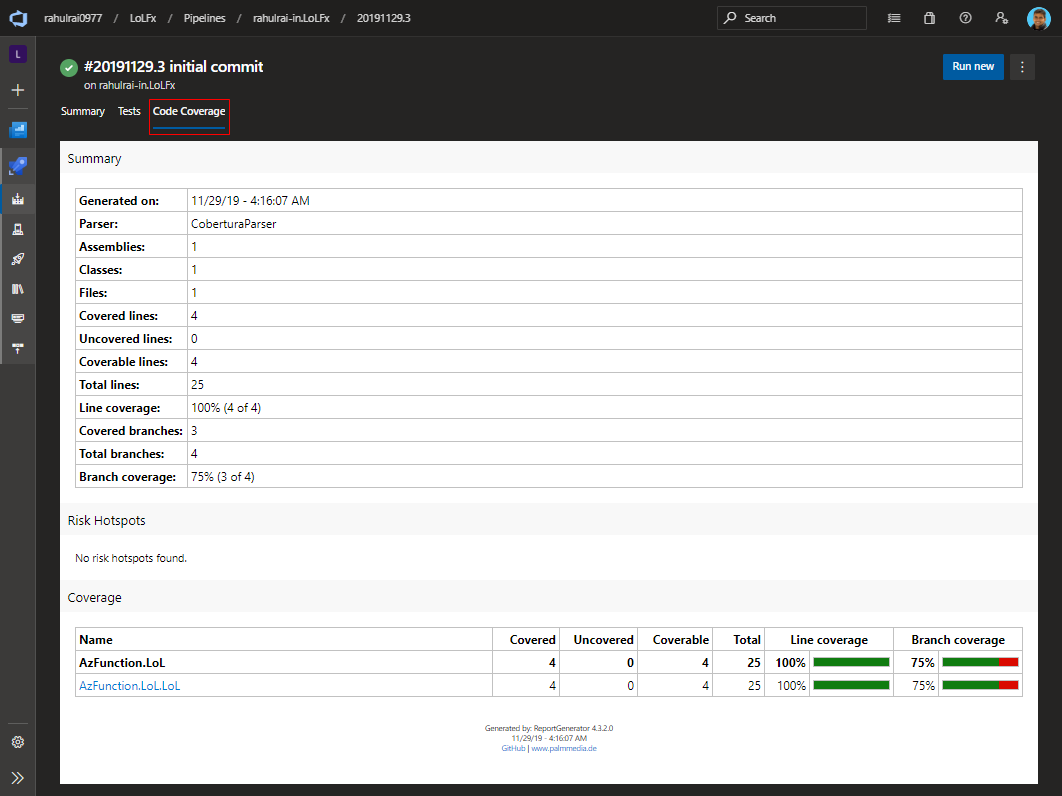

After the Build stage of the pipeline completes, you will find that a new tab named Code Coverage appears on the Azure Pipelines dashboard. The Report Generator tool generated the report that appears under this tab.

Let’s now download the binary log generated by the build and study it with the MSBuild Structured Log Viewer tool.

Binary Log in Action

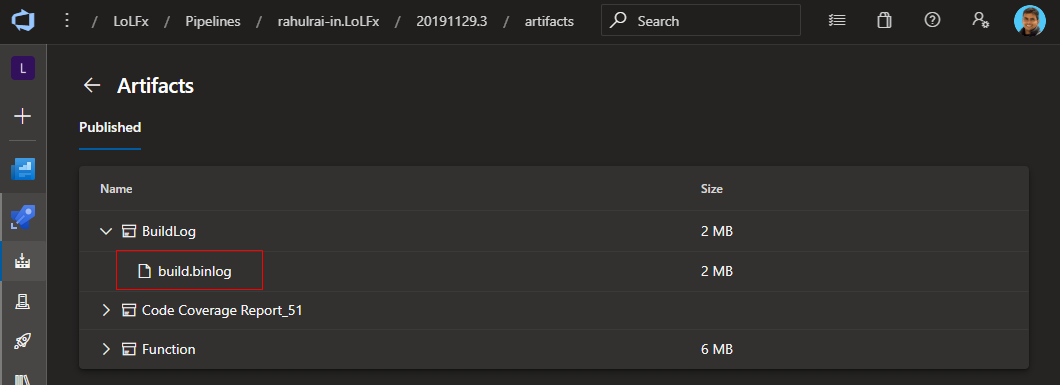

Navigate to the Artifacts published by the Pipeline and download the build.binlog file produced by the build stage of the pipeline.

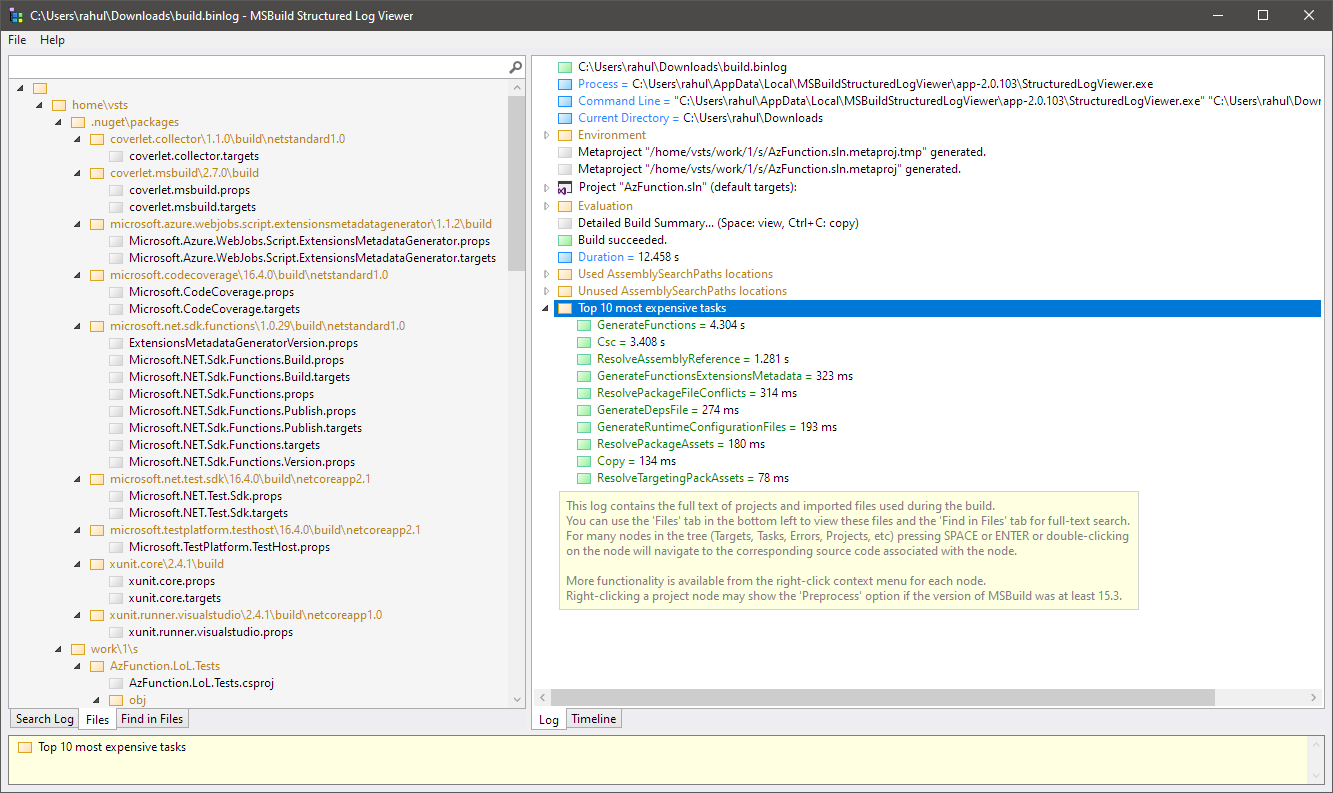

You can open this file with the MSBuild Structured Log Viewer tool to investigate the build process. Apart from assembly reference conflicts and the steps of the build process, the report shows all the environment variables of the build server and lists the build tasks that take the most time.

Build logs are critical for diagnosing assembly conflicts and for finding out why a build takes a long time on the server.

The LoLFx Function

I will use cURL to send a request to the function that the pipeline deployed to my Azure Function App. The following is the command and the output that it generates.

$ curl "https://lolfx.azurewebsites.net/api/GetLoLz?count=10"

LoL.LoL.LoL.LoL.LoL.LoL.LoL.LoL.LoL.LoL.

To conclude, we went through the steps to compose an integrated CI/CD pipeline that builds and deploys an Azure Function. We also discussed a few essential utilities that can add value to your CI/CD pipeline, namely binary logging, MSBuild Structured Log Viewer, Coverlet, and Report Generator. I hope this article encourages you to evangelize these key features of Azure DevOps.

I am working on a soon to be released FREE developer quick start guide on the Istio Service Mesh. Follow me on Twitter @rahulrai_in or subscribe to this blog to access it before everyone else.
